It is used to build the amazing Google Codelabs docs. The look and feel of a codelab is motivating and captivating, and it lets readers walk through the steps and follow complex guides without hassle.

The CLaaT tool can render this kind of doc from two sources:

- Google Docs document

- Markdown file

Google Docs is an almost perfect What You See Is What You Get (WYSIWYG) text editor, and if you combine it with the CLaaT tool, you get a very good solution for presenting codelabs just like the ones from Google.

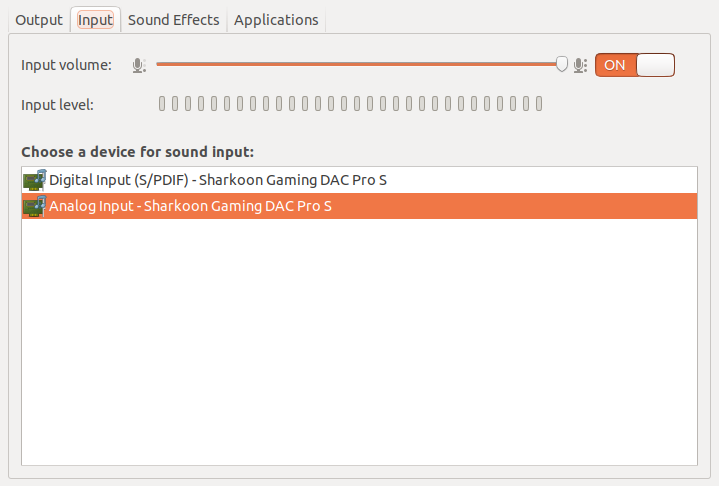

The normal way of rendering the codelabs:

So, the recipe is quite easy:

- Copy a template doc from Google Docs

- Stay consistent with the template and make the changes that suit your needs

- Run the claat tool (use the binary or build it from the code repository)

- Voilà! You will have a presentation that you can deploy and share on the internet.

That’s it, right?

But wait! There’s more!

The normal way of rendering the codelabs is very straightforward; you can’t get it wrong.

Though, I had a couple of questions:

- Is it possible to render without OAuth integration? Not every Google Workspace contract allows that.

- Can I render from an offline doc, since I want to keep the doc offline as a source of truth?

For both questions the answer would be no, as the tool cannot do that for a local HTML file.

So, I took some time to look at the code and started to wonder what changes would be needed to render the same codelab project from an offline HTML source file.

After some troubleshooting, I found the changes needed to do that.

Let me first explain how the tool works:

- The CLaaT tool always assumes that a local file is a Markdown file

- If the tool doesn’t find the file locally, it asks for OAuth authentication to get access to the Google Drive document ID

- After that, the tool fetches the HTML and, during execution, fetches all the image dependencies

- Finally, it renders the output
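The flow above can be sketched roughly as follows. This is a simplified illustration of the behavior described in the list, not claat's actual code; `sourceKind`, `resolveSource`, and `fileExists` are names made up for this sketch.

```go
package main

import (
	"fmt"
	"os"
)

// sourceKind is a hypothetical label used only in this sketch;
// it is not a claat type.
type sourceKind string

const (
	kindMarkdown  sourceKind = "markdown"   // local file, parsed as Markdown
	kindGoogleDoc sourceKind = "google-doc" // remote doc, fetched after OAuth
)

// resolveSource mirrors the decision described above: a source that exists
// on disk is treated as Markdown, anything else is assumed to be a
// Google Drive document ID that needs an authenticated fetch.
func resolveSource(src string, exists func(string) bool) sourceKind {
	if exists(src) {
		return kindMarkdown
	}
	return kindGoogleDoc
}

// fileExists reports whether path can be stat'ed on the local disk.
func fileExists(path string) bool {
	_, err := os.Stat(path)
	return err == nil
}

func main() {
	// Print the decision for every source passed on the command line.
	for _, src := range os.Args[1:] {
		fmt.Printf("%s -> %s\n", src, resolveSource(src, fileExists))
	}
}
```

The important point is the first branch: a local file never reaches the Google Docs path, which is why an exported HTML file cannot be rendered without changing the tool.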

That’s it! Most of the work is already done for us, since the CLaaT tool already knows how to process an export from Google Docs.

So, long story short, we need to make two changes:

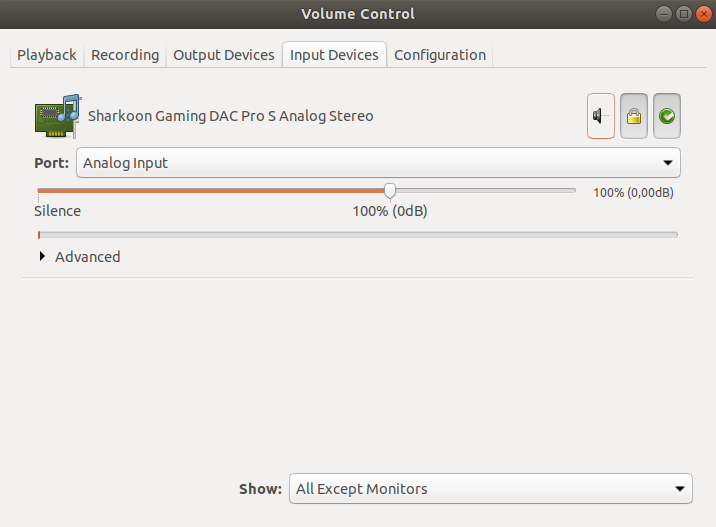

1. In the file claat/fetch/fetch.go, you will have to comment out some lines in the following function:

        // SlurpCodelab retrieves and parses codelab source.
        // It takes the source, plus an auth token and a set of extra metadata to pass along.
        // It returns parsed codelab and its source type.
        //
        // The function will also fetch and parse fragments included
        // with nodes.ImportNode.
        func (f *Fetcher) SlurpCodelab(src string, output string) (*codelab, error) {
            ...
            if !isStdout(output) {
                // download or copy codelab assets to disk, and rewrite image URLs
                var nodes []nodes.Node
                for _, step := range clab.Steps {
                    nodes = append(nodes, step.Content.Nodes...)
                }
                //err := f.SlurpImages(src, imgDir, nodes, images)
                //if err != nil {
                //    return nil, err
                //}
            }
            ...
        }

This change prevents the tool from fetching external images (more on that below).

2. In the same file, we have to make another change, this time so that a local file is treated as a Google Docs document by default:

        // fetch retrieves codelab doc either from local disk
        // or a remote location.
        // The caller is responsible for closing returned stream.
        func (f *Fetcher) fetch(name string) (*resource, error) {
            ...
            return &resource{
                body: r,
                typ:  SrcGoogleDoc,
                mod:  fi.ModTime(),
            }, nil
        }

As you can see, we have changed the default from SrcMarkdown to SrcGoogleDoc.
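A possible refinement, which is not part of the patch described here: instead of hard-coding the type, the tool could pick it from the file extension, so that local Markdown files keep working. The following is a minimal sketch under that assumption; `srcType`, its two values, and `localSrcType` are hypothetical stand-ins and not claat's own definitions.

```go
package main

import (
	"fmt"
	"path/filepath"
	"strings"
)

// srcType is a hypothetical stand-in for the tool's source type.
type srcType string

const (
	srcMarkdown  srcType = "md"   // placeholder value for this sketch
	srcGoogleDoc srcType = "gdoc" // placeholder value for this sketch
)

// localSrcType guesses the source type of a local file from its extension:
// an HTML export is handled like a Google Doc, everything else as Markdown.
func localSrcType(name string) srcType {
	switch strings.ToLower(filepath.Ext(name)) {
	case ".html", ".htm":
		return srcGoogleDoc
	default:
		return srcMarkdown
	}
}

func main() {
	for _, name := range []string{"MyCodelab.html", "codelab.md"} {
		fmt.Printf("%s -> %s\n", name, localSrcType(name))
	}
}
```

With a check like this in place of the hard-coded value, the same binary could serve both local Markdown sources and offline Google Docs exports.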

The steps described above work for the following commit:

    $ git show-ref HEAD
    0f9386372553c3f0570eeca6889c675a74ec0abb refs/remotes/origin/HEAD

To make it easier, grab this patch and run the following command from inside the project's source folder:

    $ patch -p1 < path_to_the_patch_downloaded

How is it done?

The changes we made will allow two things:

- Define the default local file as a Google Doc file

- Bypass the authentication needed to fetch the online doc

TL;DR it will make the tool think the local file is a Google Doc file.

First, since this is a hack, you have to build the tool from source, as described in the previous section.

After that, we have the following steps:

- In Google Docs, export the doc to HTML using the menu File => Download => Web Page (.html, zipped)

- Extract the zip, which contains BOTH the HTML file and the images referenced in the document

- Run the CLaaT tool on the extracted HTML file

- Copy the images directory from the extracted zip into the destination folder (the same folder the CLaaT tool writes its output to)
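The extraction step can also be scripted. Below is a minimal sketch that unpacks the downloaded zip using Go's standard `archive/zip` package; the helper names (`safeJoin`, `extractExport`, `writeEntry`) and the command-line arguments are assumptions made for this example, and running CLaaT and copying the images directory are still done separately.

```go
package main

import (
	"archive/zip"
	"fmt"
	"io"
	"os"
	"path/filepath"
	"strings"
)

// safeJoin joins dest and a zip entry name, rejecting entries that would
// end up outside dest (for example "../evil.html").
func safeJoin(dest, name string) (string, error) {
	target := filepath.Join(dest, name)
	rel, err := filepath.Rel(dest, target)
	if err != nil || rel == ".." || strings.HasPrefix(rel, ".."+string(filepath.Separator)) {
		return "", fmt.Errorf("illegal path in archive: %q", name)
	}
	return target, nil
}

// writeEntry copies a single zip entry to the target path.
func writeEntry(f *zip.File, target string) error {
	src, err := f.Open()
	if err != nil {
		return err
	}
	defer src.Close()

	dst, err := os.Create(target)
	if err != nil {
		return err
	}
	if _, err := io.Copy(dst, src); err != nil {
		dst.Close()
		return err
	}
	return dst.Close()
}

// extractExport unpacks the zipped web page export (the HTML file plus
// its images) into dest.
func extractExport(zipPath, dest string) error {
	r, err := zip.OpenReader(zipPath)
	if err != nil {
		return err
	}
	defer r.Close()

	for _, f := range r.File {
		target, err := safeJoin(dest, f.Name)
		if err != nil {
			return err
		}
		if f.FileInfo().IsDir() {
			if err := os.MkdirAll(target, 0o755); err != nil {
				return err
			}
			continue
		}
		// Make sure the parent directory (e.g. images/) exists first.
		if err := os.MkdirAll(filepath.Dir(target), 0o755); err != nil {
			return err
		}
		if err := writeEntry(f, target); err != nil {
			return err
		}
	}
	return nil
}

func main() {
	if len(os.Args) != 3 {
		fmt.Fprintln(os.Stderr, "usage: extract <export.zip> <dest-dir>")
		os.Exit(1)
	}
	if err := extractExport(os.Args[1], os.Args[2]); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
}
```

After extraction, the destination directory should hold the HTML file and the images directory side by side, ready for the remaining two steps.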

With that, you will be able to generate the codelab from the local file, while being able to reference all the images locally.

This works because the exported HTML already contains those references, and for local files we have commented out the code that would otherwise rewrite them and fetch the images from Google Docs.
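To double-check that nothing was missed when copying, a small helper can list the images the exported HTML refers to and report the ones that are absent from the destination folder. This is only a sketch: it assumes the export uses plain relative `src` attributes (such as `images/image1.png`), it uses a regular expression instead of a real HTML parser, and `imageRefs` is a name invented for this example.

```go
package main

import (
	"fmt"
	"os"
	"path/filepath"
	"regexp"
)

// imgSrc matches the src attribute of <img> tags. A regular expression is
// enough for a quick check; it is not a full HTML parser.
var imgSrc = regexp.MustCompile(`<img[^>]*\ssrc="([^"]+)"`)

// imageRefs returns every image reference found in the given HTML,
// in document order.
func imageRefs(html string) []string {
	var refs []string
	for _, m := range imgSrc.FindAllStringSubmatch(html, -1) {
		refs = append(refs, m[1])
	}
	return refs
}

func main() {
	if len(os.Args) != 3 {
		fmt.Fprintln(os.Stderr, "usage: checkimages <exported.html> <dest-dir>")
		os.Exit(1)
	}
	data, err := os.ReadFile(os.Args[1])
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	// Report every referenced image that is not present under dest-dir.
	for _, ref := range imageRefs(string(data)) {
		path := filepath.Join(os.Args[2], filepath.FromSlash(ref))
		if _, err := os.Stat(path); err != nil {
			fmt.Printf("missing: %s\n", ref)
		}
	}
}
```

Any reference that is not a relative path (a remote URL, for instance) will also show up as missing, which is a useful hint that the document still depends on online content.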

Final thoughts

As you can see, it is not a perfect solution. It involves some manual steps, and it may need updating from time to time to stay current.

Aside from that, it works perfectly! It answers all the initial questions I was asking, and it gives me back all the control I wanted over the offline doc.

😀
